Apple Plans a Siri Camera Mode and Upgraded Visual AI in iOS 27: A New Frontier for Smartphones

The tech world is constantly abuzz with rumors, but when Bloomberg reports on Apple’s long-term software roadmap, it’s time to pay attention. Recent reports, along with industry analysis, point toward a major shift in how we interact with our devices. Looking ahead to iOS 27, Apple is reportedly developing a sophisticated Siri Camera Mode and significantly upgraded visual AI that could reshape mobile photography and augmented reality (AR).

In this deep dive, we explore what these potential changes could mean for your daily digital life, why visual artificial intelligence is the next battleground for tech giants, and how Apple reportedly intends to integrate these features into the heart of the iPhone experience.

The Evolution of Siri: From Voice Commands to Visual Intelligence

For years, Siri has been primarily an auditory companion: you ask a question, and she replies. However, the limits of a voice-only interface are increasingly apparent now that generative AI and multimodal models are becoming the standard. To remain competitive, Apple is reportedly shifting its focus toward a proactive AI that can “see.”

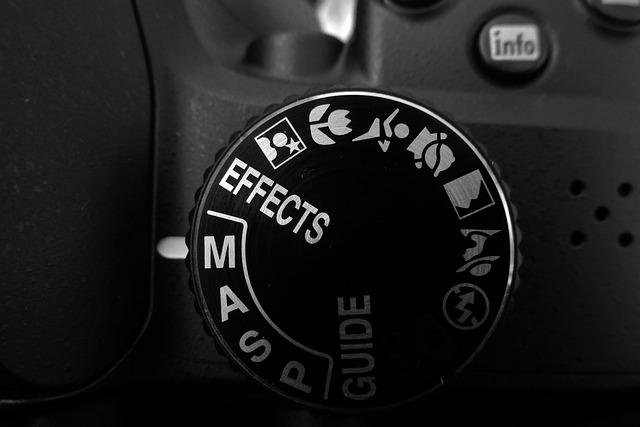

According to reports, iOS 27 will likely feature a Siri Camera Mode. Imagine opening your camera app, pointing it at a complex piece of restaurant equipment or a foreign street sign, and having Siri provide instant, context-aware information. This isn’t just about reading text; it’s about turning visual data into actionable advice.

Key Features Expected in the Visual Overhaul:

- Contextual Recognition: Siri will recognize physical objects, landmarks, and documents with higher precision.

- Live AR Overlays: Real-time translations and UI interactions appearing directly over the camera feed.

- Privacy-First Processing: True to Apple’s brand, most visual analysis is expected to occur on-device to ensure user data remains secure.

- Integration with Apple Vision Pro: Seamless handoffs between the iPhone and the Apple Vision Pro ecosystem.

Why Visual AI is the Next Big Move for Apple

Visual AI is no longer a futuristic concept; it is becoming the infrastructure for the next generation of mobile computing. By leveraging the Neural Engine built into Apple’s A-series and M-series chips, the upgraded visual AI in iOS 27 reportedly aims to bridge the gap between digital content and the physical world.

When software can “see” what users see, the device becomes far more useful. Whether for accessibility (helping visually impaired users navigate their surroundings) or for productivity, a phone that understands its environment could become an essential tool for navigation and decision-making.

| Feature | Benefit | Potential Use Case |
|---|---|---|
| Siri Camera Mode | Instant Information | Identifying plants or machine parts |
| Depth Mapping | Spatial Awareness | Advanced room measurement for DIY projects |
| Real-Time Translation | Global Connectivity | Translating street signs while traveling |

Benefits and Practical Tips for Early Adopters

If you are an enthusiast looking to make the most of these features once they roll out, preparation is key. Here are some benefits of adopting these AI-forward workflows and tips on how to prepare your digital
